The script does two passes over the JSON file. The first pass through the JSON data collects all column names; they will be used for the field names in the CSV file. It uses the ijson library to read the JSON file incrementally. I used StringIO for testing; you'll want to change StringIO to open (e.g., `with open(...) as f`). Also, the script only handles string data, because that's what is in the example data.

The script provides an outline; you can change the two passes to get what you want. I suspect this still isn't what you want, because each unique data element gets its own column in the CSV file.
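The two-pass idea can be sketched with the standard library alone. This is a minimal illustration, not the answer's original script: the helper names (`collect_fieldnames`, `write_rows`, `two_pass_convert`) and the sample records are hypothetical, and a plain list stands in for the streamed file. With a real 60 GB input, the records would presumably come from an incremental parser instead, for example `ijson.items(f, "item")` if the file is one top-level array (an assumption about the layout).

```python
import csv
import io


def collect_fieldnames(records):
    """First pass: gather every key seen, in first-seen order."""
    fieldnames = {}  # a dict serves as an ordered set of column names
    for record in records:
        for key in record:
            fieldnames.setdefault(key, None)
    return list(fieldnames)


def write_rows(records, fieldnames, out_file):
    """Second pass: one CSV row per record; missing keys become empty cells."""
    writer = csv.DictWriter(out_file, fieldnames=fieldnames, restval="")
    writer.writeheader()
    for record in records:
        writer.writerow(record)


def two_pass_convert(make_records, out_file):
    """Run both passes. make_records is a zero-argument callable that
    returns a fresh iterator each time, so the source is read twice
    instead of being held in memory."""
    fieldnames = collect_fieldnames(make_records())
    write_rows(make_records(), fieldnames, out_file)
    return fieldnames


# Hypothetical, already-flattened records standing in for the real file.
sample = [{"a": "1", "b.c": "2"}, {"a": "3", "d": "4"}]
out = io.StringIO(newline="")
columns = two_pass_convert(lambda: iter(sample), out)
csv_text = out.getvalue()
```

Only the set of column names is kept in memory between the passes; the cost is reading the input twice, which matches the stated trade-off of lower memory use for a slower run.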

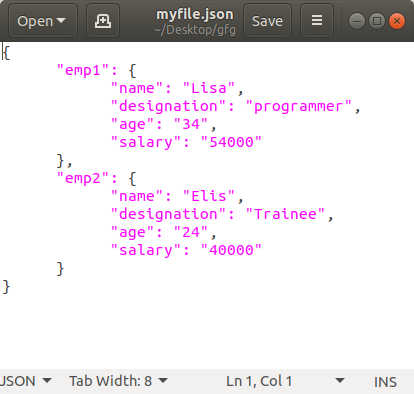

Your script seems to create a one-row CSV file with each data element having a separate column. That didn't seem to make much sense, so here's a script that creates a new CSV row for each top-level object in the JSON file.

For reference, the original question:

The task is simple: take a JSON file as input and convert the data in it into a CSV file. I won't describe the functionality in too much detail since I have reasonable docstrings for that. As you can see, my solution is not memory efficient since I'm reading the whole file into memory. The JSON file that I'm trying to convert is 60 GB and I have 64 GB of RAM. I'd like to improve the performance of my solution as much as possible (perhaps by not loading everything into memory at once, even if it's going to be slower).

The code consists of two functions. `flattenjson(json_data, delim)` flattens a simple JSON by prepending a delimiter to nested children; `delim` (str) is the delimiter for nested children (e.g. `'.'`). `write_json_to_csv(flattened_json, csv_path)` takes `flattened_json` (dict), the flattened JSON object, and writes it with `csv.DictWriter(out_file, flattened_json.keys())`, so the keys become the header of the CSV and the values become, well, the values. The main part calls `flattened_json = flattenjson(json_data, '.')` followed by `write_json_to_csv(flattened_json, CSV_PATH)`.

Some constraints:

- I don't know where the JSON file comes from, so I have to stick with it and process it as is.
- I can't change the structure of the JSON file.
- As far as I saw, the JSON data will be nested at most 7 levels deep.
- I have to write the data to the CSV file as described above.

PS: I've done the above in Python 3.8.2, so I'd like you to focus on a version of Python >= 3.6. I'm specifically looking for a review oriented toward memory optimizations, which probably come at the cost of a slower running time (that's fine), but any other overall improvements are welcome!
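As an illustration of the flattening step described in the question, here is a minimal sketch. It is not the asker's original `flattenjson`: the name `flatten_json`, the handling of lists by index, and the sample data are assumptions made for this example.

```python
def flatten_json(obj, delim=".", prefix=""):
    """Return a flat dict mapping delimiter-joined paths to leaf values.

    Assumption: list elements are addressed by their index, so
    {"a": ["x"]} becomes {"a.0": "x"}. Empty dicts and lists produce
    no entry at all.
    """
    flat = {}
    if isinstance(obj, dict):
        items = obj.items()
    elif isinstance(obj, list):
        items = enumerate(obj)
    else:
        # Leaf value: store it under the path built so far.
        flat[prefix] = obj
        return flat
    for key, value in items:
        path = f"{prefix}{delim}{key}" if prefix else str(key)
        flat.update(flatten_json(value, delim, path))
    return flat


# Hypothetical nested object, well within the 7-level limit mentioned above.
nested = {"a": {"b": "x", "c": ["y", "z"]}, "d": "w"}
flat = flatten_json(nested)
```

Because the nesting is said to be at most 7 levels deep, plain recursion is safe here. Applied to one top-level object at a time rather than to the whole file, the function keeps only a single record in memory.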
